Learning Maths for the Last Time

A Hitchhiker's Guide to Hugging Face

Please give a follow and check out the co-authors, they have some pretty impressive stuff. Thanks for creating a space to talk about tiny models, without looking like fools. CompactAI is pretty cool ♥

Howdy, it's me Shane again...

Long-form post today, settle in. I've been holding onto this story for a century and today's the day the lid comes off.

I have no business affording some of the datasets I've put out

That's the truth. Numbers don't add up on paper. How does some neanderthal trending on Hugging Face from a kitchen table burn the kind of compute I've been burning? You might've wondered. I would.

Last year I caught a gig.

I'd just dropped a fine-tune that scored stupidly high on EQ, the empathy benchmark nobody really gambles on. A week later a publishing outfit slid into my inbox. They wanted books. Whole books. Cover-to-cover, beginning-middle-end, prompted out of Claude exactly like a publishing house would do it.

Can't tell you who. NDA's tight as a drum.

What I can tell you...

══════════════════════════════════════════════════

Charged to their card via Claude API:

$25,000+ USD

I architected every prompt.

60% of the data this ghost-writer uses today

is generated and curated by yours truly.

══════════════════════════════════════════════════

Unforgettable first job in AI. All thanks to one little model with a good heart on a benchmark nobody talks about. The kicker? I never expected anybody to actually use an EQ score for hiring. Joke's on me, I guess. Best joke I've ever been on.

From clanker hater to clanker maker

That gig flipped me. I never knew the clanker hater from a year ago would be the guy hollering full steam at his terminal at 3am... but here we are.

Real shift was figuring out I didn't want to use models anymore. I wanted to build them.

Tiny ones. Stupid-tiny ones. Small enough to fit on a 12 GB card. Small enough that every architectural decision is naked. Big enough to embarrass me when I get it wrong.

That's where FANT came in.

The culprit. The muse for all my AI creations from that point forward.

FANT 1, FANT 2, FANT 3. Every iteration weirder than the last. Each one a complete rewrite. Each one teaching me a piece of the puzzle I didn't know was missing.

The win that proved the weird stuff works

Here's what I keep relearning... the wins are never where the GPU brochure tells you they are. They're in the weird stuff.

So let me tell you about SleepGate.

SleepGate is a memory consolidation routine. Runs every 100 training steps. Sounds like nothing. Looks like nothing. Half a screen of code, most of it comments.
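For the shape of the thing, here's a minimal sketch of an every-100-steps hook. Only the cadence comes from this post. The `Memory` layout and the `consolidate` body are hypothetical stand-ins (a plain running average), not the FANT code.

```python
from dataclasses import dataclass, field

CONSOLIDATE_EVERY = 100  # from the post: the routine runs every 100 training steps


@dataclass
class Memory:
    # Hypothetical layout: a long-term store plus a scratch buffer of recent writes.
    long_term: list
    recent: list = field(default_factory=list)

    def write(self, values):
        self.recent.append(values)

    def consolidate(self, rate=0.5):
        """Stand-in consolidation: blend the mean of recent writes into long_term."""
        if not self.recent:
            return
        n = len(self.recent)
        for i in range(len(self.long_term)):
            mean_i = sum(w[i] for w in self.recent) / n
            self.long_term[i] = (1 - rate) * self.long_term[i] + rate * mean_i
        self.recent.clear()


def train(memory, total_steps):
    """Toy loop: one write per step, consolidation on every 100th step."""
    passes = 0
    for step in range(1, total_steps + 1):
        # Placeholder for a real training step that produces a memory write.
        memory.write([float(step)] * len(memory.long_term))
        if step % CONSOLIDATE_EVERY == 0:
            memory.consolidate()
            passes += 1
    return passes
```

Running `train(Memory([0.0, 0.0]), 250)` triggers two consolidation passes and leaves the last 50 writes waiting in `recent` for the next one. The point is only that the hook sits outside the optimizer, the data, and the schedule.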

But on FANT 2 at 5 million parameters, on a 1,000-problem procedural-math eval, it does this:

Five and a third points. Off one architectural decision. At 5 million parameters. Without changing the optimizer, the data, or the schedule.

That's the kind of finding that ruins your weekend, because suddenly your todo list ain't implement the next feature, it's implement the next feature AND figure out why a tiny consolidation pass moved the needle five points.

That's the kind of moment FANT was built for.

This is progress.

The new weird thing — SpinorApollonian Memory

The next experiment is even less normal.

I read a paper by a Polish mathematician named Jerzy Kocik on tangency spinors, a way of classifying Apollonian disk packings using two-dimensional Minkowski spinors. (You think I'm making that up. I'm not. Descartes' circle theorem turns out to be the vanishing of a Minkowski quadratic form of signature (1,3). Math is wild.)
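That parenthetical is checkable at a kitchen table. Descartes' theorem says four mutually tangent circles with curvatures b1..b4 satisfy (b1+b2+b3+b4)² = 2(b1²+b2²+b3²+b4²). Move everything to one side and you have a quadratic form Q that is negative along one direction and positive along three. Whether that gets written (1,3) or (3,1) is a sign convention. The function names and the choice of basis below are illustrative, not taken from Kocik's paper.

```python
def descartes_form(b):
    """Q(b) = 2*sum(b_i^2) - (sum b_i)^2; Q == 0 for four mutually tangent circles."""
    return 2 * sum(x * x for x in b) - sum(b) ** 2


# Curvatures of the classic Apollonian starting configuration:
# the outer circle has negative curvature because it encloses the others.
assert descartes_form([-1, 2, 2, 3]) == 0

# A basis in which Q is diagonal (the vectors are mutually orthogonal for Q).
BASIS = [
    (1, 1, 1, 1),
    (1, -1, 0, 0),
    (1, 1, -2, 0),
    (1, 1, 1, -3),
]


def signature():
    """Count the negative and positive directions of Q on BASIS."""
    values = [descartes_form(v) for v in BASIS]
    return sum(v < 0 for v in values), sum(v > 0 for v in values)


print(signature())  # (1, 3)
```

The all-ones direction gives Q = -8 and the other three give 4, 12, and 24, so the form has one "time-like" axis and three "space-like" ones.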

Then I stapled the construction onto FANT's memory router.

What it does in plain English... it splits memory writes by chirality. Left-spinning packs go to one bucket. Right-spinning packs go to another. Geometric routing instead of threshold routing. The failure mode I'd been chasing for two months — packs starving each other into uselessness — vanished overnight. Same pattern, every scale I tested, 5M up through 742M.
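To make "geometric routing instead of threshold routing" concrete, here's a toy contrast. Everything in it is an assumption for illustration: a write is reduced to a pair of 2D vectors, "chirality" is the sign of their cross product, and the bucket names are made up. FANT's actual router uses the tangency-spinor construction and lives in the repo.

```python
def threshold_route(score, threshold=0.5):
    """Threshold routing: bucket chosen by comparing a scalar score to a tuned cutoff."""
    return "a" if score >= threshold else "b"


def chirality(u, v):
    """Sign of the 2D cross product: +1 counter-clockwise, -1 clockwise, 0 collinear."""
    cross = u[0] * v[1] - u[1] * v[0]
    return (cross > 0) - (cross < 0)


def chirality_route(u, v):
    """Geometric routing: bucket chosen by orientation, with no cutoff to tune.

    Collinear pairs (chirality 0) are sent to "left" here, an arbitrary tie-break.
    """
    return "left" if chirality(u, v) >= 0 else "right"
```

One property worth noticing in the toy version: rescaling both vectors by any positive factor leaves the route unchanged, while a threshold route flips as soon as the score drifts across the cutoff.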

The math was right.

Sounds like peanut butter on a hamburger... but the ablation table says it works. Full design notes are in the FANT 3 repo if you want the deep dive.

Now — meet Sparrow

I've been doing other things, mainly math. More about that to come.

Sparrow is the math model. He's analytical. A little stubborn. He does math like a grade 9 student — which, if you know me, is a victory, because last week he was doing math like a broken calculator, and the week before he was a malformed robot named Scamp.

Sparrow ain't FANT. Different skeleton, different router, different everything. He's small. He's surgical.

And — here's the kicker:

Across 1,900 evaluation questions, Sparrow scores 95.6%. Owl Alpha scores 61.4% on the same set. Sparrow does it at 1,000,000 parameters.

One. Million. Parameters.

Checked it twice. Bothered three friends. Re-ran the eval at three temperatures. Same numbers every time. Unbelievable, right?

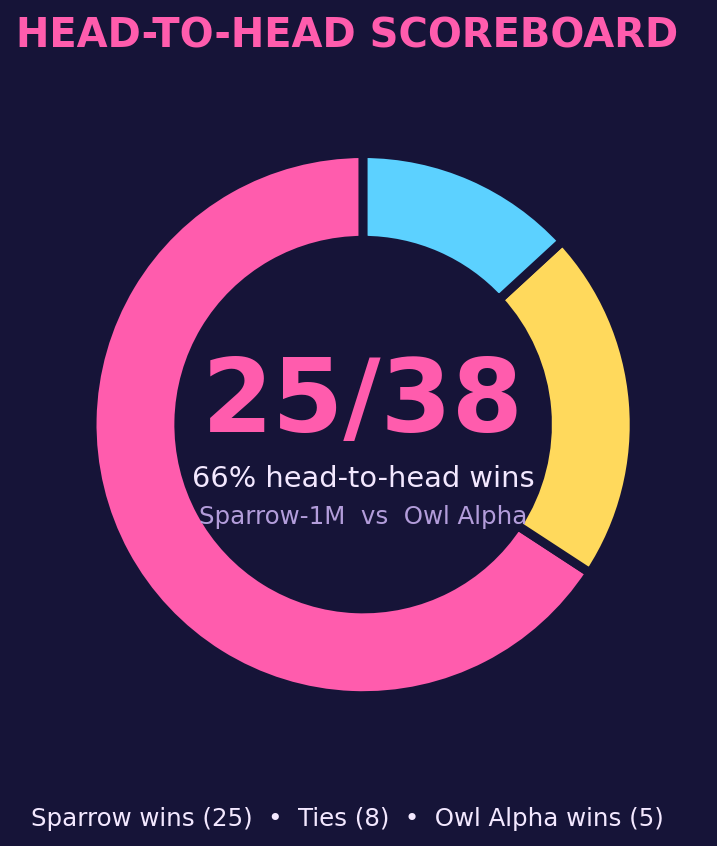

Here's the head-to-head over 38 evals (n=50 each, numeric scoring):

Sparrow ties or beats Owl Alpha on 33 of 38 head-to-heads (87%). The five losses are all on simple multiplication and division at digit-counts where Owl Alpha's training data is dense — fair fight, Owl wins clean.
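The arithmetic behind those headline numbers is simple enough to write down. A minimal sketch: `tie_or_win_rate` is a hypothetical helper, not the eval harness, and the only figures plugged in are the ones quoted in this post.

```python
def tie_or_win_rate(pairs):
    """Fraction of (ours, theirs) score pairs where ours >= theirs."""
    wins_or_ties = sum(1 for ours, theirs in pairs if ours >= theirs)
    return wins_or_ties / len(pairs)


EVALS = 38               # head-to-head evals
QUESTIONS_PER_EVAL = 50  # n=50 each

total_questions = EVALS * QUESTIONS_PER_EVAL    # the 1,900 evaluation questions
headline_rate = 33 / EVALS                      # ties-or-wins share, rounds to 87%
losses = EVALS - 33                             # the five losses
points_per_question = 100 / QUESTIONS_PER_EVAL  # one question moves a single eval by 2 pp
```

That last line is worth keeping in mind when reading per-eval gaps: at n=50, a 2 pp difference on one eval is a single question.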

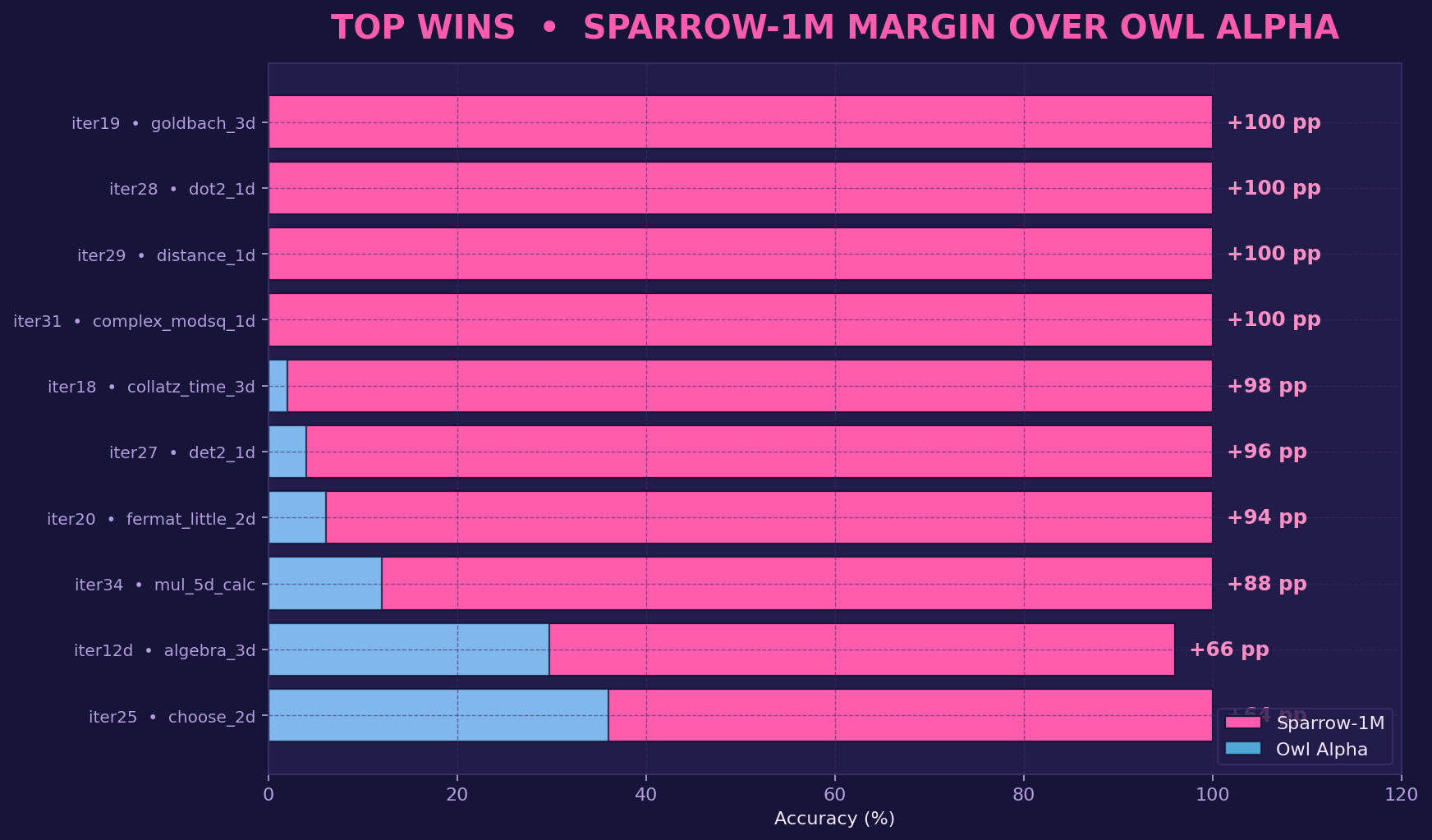

But on the interesting tasks — Goldbach, Collatz, Fermat-little, complex modulus, dot products, distance, determinants, calc-tag-aided 5-digit multiplication — Sparrow turns into a wood chipper:

+100 pp. +98 pp. +88 pp. A 1M-parameter byte-level toy beating a 70B-class frontier model on these tasks by margins you usually only see in a paper figure that turned out to be a bug.

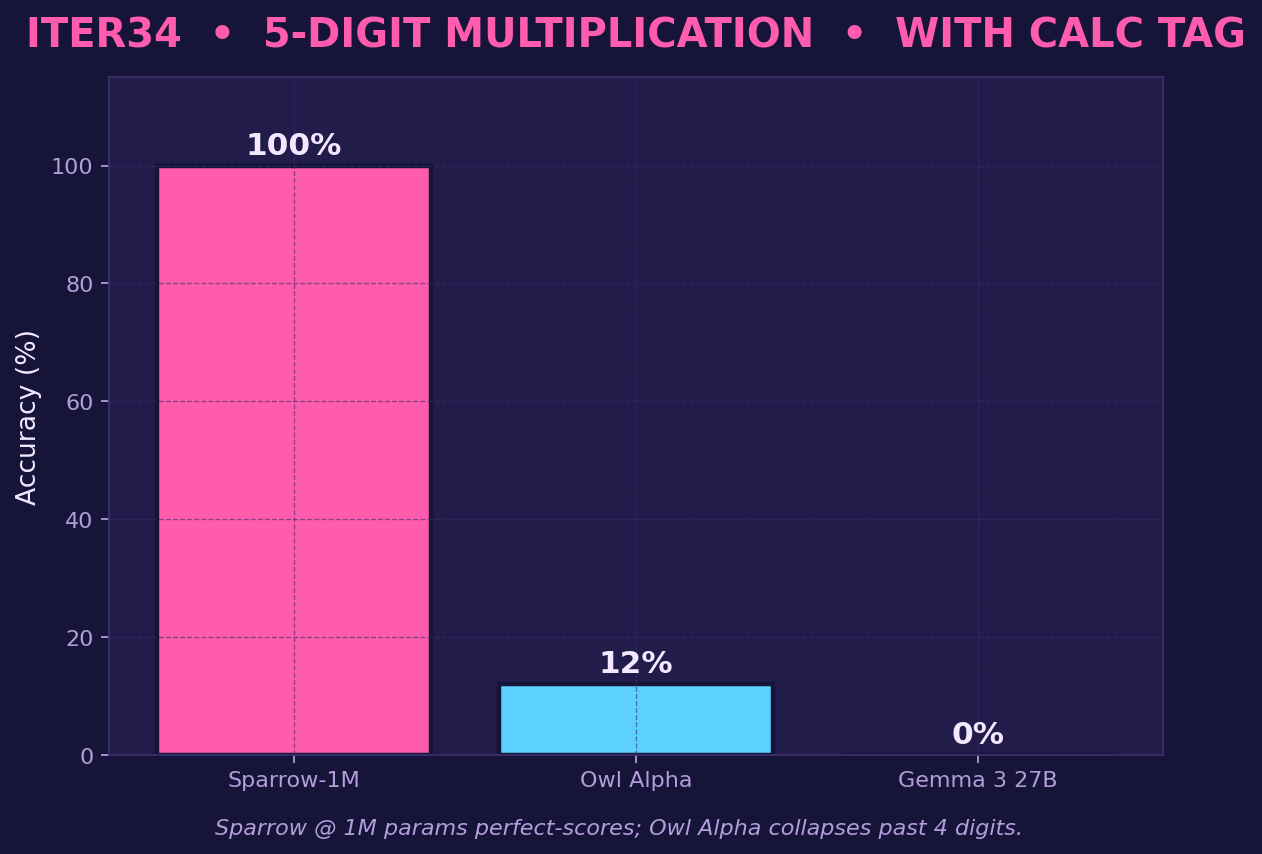

Here's the iter34 5-digit multiplication shot specifically — the one that made me check my eval harness three times before I'd believe it:

Owl Alpha at 12%. Gemma 3 27B at 0%. Sparrow at 100%. Calc-tag wrapper does the heavy lifting on the arithmetic — Sparrow learns to call it correctly. That's the trick.
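For anyone who hasn't seen the pattern, here's a minimal sketch of a calc-tag wrapper. The tag syntax (`<calc>` and `</calc>`), the supported operators, and the function name are assumptions for illustration, not necessarily what Sparrow's harness uses. The model's job is to emit the right expression inside the tag, and the wrapper swaps in the exact result.

```python
import re

# Assumed tag format: <calc>INT OP INT</calc>, with OP one of + - * //
CALC_TAG = re.compile(r"<calc>\s*(-?\d+)\s*(\+|-|\*|//)\s*(-?\d+)\s*</calc>")

OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
    "//": lambda a, b: a // b,
}


def resolve_calc_tags(text):
    """Replace each well-formed calc tag with its exact integer result."""

    def _evaluate(match):
        left, op, right = int(match.group(1)), match.group(2), int(match.group(3))
        if op == "//" and right == 0:
            return match.group(0)  # leave the tag untouched rather than crash
        return str(OPS[op](left, right))

    return CALC_TAG.sub(_evaluate, text)
```

With this wrapper, `resolve_calc_tags("Answer: <calc>12345*67890</calc>")` returns the text with the tag replaced by the exact product. A malformed tag is left as-is, so a model that calls the tool wrong still gets scored wrong.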

Full scoreboard: all 38 head-to-heads

Each cluster is one iteration. Pink = Sparrow-1M, cyan = Owl Alpha (via OpenRouter), yellow = Gemma 3 27B (via OpenRouter). Numeric scoring, n=50 questions per eval, k=1.

The kicker to the kicker... I cannot get Sparrow's trick to play nice with FANT's architecture. Been at the fusion for weeks. I know there's a thread between them — something about how Sparrow handles symbolic state that should drop straight into FANT's recursion stack — but it has eluded me.

So I'm leaving the breadcrumbs in public, on the off chance one of you brilliant Hugging Face folks can finish what I couldn't. Repo's open. Issues are open. DMs are open.

Half-built spaceships are best shared.

What's next

Anyways. Sparrow needs more debugging before he's a model I'd hand to a stranger. Once he's clean, he ships under @CompactAI like the rest of my finished work.

I post about once a week now (daily was eating me alive). The Discord is where the noise happens: nightly dataset drops, training-run hollering, whatever wild idea I'm chasing this week.

So long, and thanks for all the gradients.

-Shane

P.S. Join the Discord for the loud part: discord.gg/8ZscHNmJYE

P.P.S. Finished work goes here: @CompactAI. The muse lives here: github.com/Crownelius/fant3