GPT 5.5 helped me extend the framework extensively.

For more detail, refer to the OSF article:

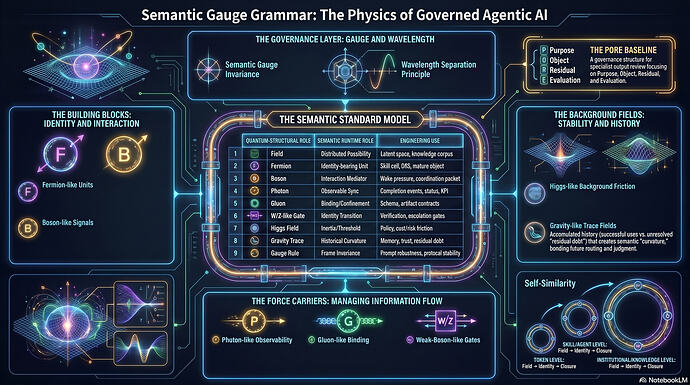

Semantic Gauge Grammar for Agentic AI: From Fermions and Bosons to Self-Similar Runtime Governance - A Quantum-Structural Design Grammar for Skills, Signals, Knowledge Objects, and Governed Decision Systems

Appendix A — Quantum-to-Semantic Layer Mapping Reference

A.1 The Five Semantic Runtime Levels

| Level | Runtime Layer | Main Unit | Main Question |
| --- | --- | --- | --- |
| L0 | Token / latent layer | token, feature, activation pattern | What continuation is locally selected? |
| L1 | Skill / coordination-cell layer | skill cell, artifact contract | What bounded transformation just closed? |
| L2 | Agent / DSS layer | specialist system, domain agent | Which domain identity is reasoning? |
| L3 | Knowledge-management layer | mature knowledge object | What knowledge is bound, scoped, and reusable? |
| L4 | Governance / institution layer | governed judgment, residual ledger | What decision is accountable? |

A.2 Master Mapping Table

| Quantum / physics element | Functional role in physics | L0 Token / latent layer | L1 Skill layer | L2 Agent / DSS layer | L3 Knowledge layer | L4 Governance layer | Engineering meaning |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Field | Distributed condition over a domain | latent semantic possibility space | task possibility space | domain problem space | raw knowledge landscape | competing institutional interpretations | The space of possible meanings before closure |
| Wavefunction | Encodes possible states and amplitudes | next-token probability / latent state | possible skill outcomes | possible specialist interpretations | possible knowledge object formulations | possible judgments | Structured possibility before selection |
| Superposition | Multiple possible states coexist before measurement | many token continuations remain possible | several candidate transformations remain possible | multiple domain readings coexist | raw source admits multiple interpretations | multiple policy / expert conclusions remain open | Do not collapse too early |
| Projection / measurement | Makes one aspect visible under a chosen setup | prompt / context selects token path | decomposition selects skill route | active universe selects DSS frame | indexing / schema exposes a knowledge structure | PORE frame exposes Purpose / Object / Residual / Evaluation | Observation path shapes what becomes visible |
| Observer | Bounded apparatus or frame of measurement | context window + model state | skill cell with limited input/output | DSS with domain boundary | knowledge curator / maturity protocol | governance layer / review board | No system sees total reality; each sees through bounds |
| Collapse | Possibility resolves into realized outcome | token selected | artifact produced | specialist answer formed | mature object created | governed decision issued | Closure event |
| Decoherence | Coherent alternatives lose usable phase relation | competing continuations become irrelevant | unused routes decay | alternative domain frames are dropped | raw alternatives become background | unresolved options become residual | Soft possibility becomes practical commitment |
| Trace / worldline | History of state evolution | generated context | execution log | specialist reasoning path | provenance / update history | audit trail | What happened must be replayable |
| Residual | Remainder not absorbed by model / closure | entropy / uncertainty | failure marker / ambiguity | boundary risk / missing evidence | coverage gap | residual debt / escalation packet | Honest leftover after closure |
| Coarse-graining | Compress lower-level detail into higher-level object | tokens become phrases | local outputs become artifacts | artifacts become specialist answers | raw sources become mature objects | specialist outputs become institutional decisions | Each level treats lower-level closure as object |
| Renormalization | Re-express system at a new scale | token patterns become concepts | skill closures become workflow states | DSS outputs become knowledge updates | knowledge objects reshape future retrieval | governance traces reshape policy | Same grammar repeats after scale transformation |

A.3 Fermion-Like Identity Mapping

Core rule:

Fermion-like unit = boundary + identity + admissibility + responsibility. (A.3)

| Fermion property | Semantic interpretation | L0 | L1 | L2 | L3 | L4 | Engineering use |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Identity preservation | The unit remains itself across operations | feature circuit remains distinct | skill cell keeps task scope | DSS keeps domain identity | knowledge object keeps universe boundary | decision record keeps authority boundary | Prevent semantic blur |
| Pauli-like exclusion | Two incompatible identities cannot occupy same role | incompatible token modes cannot both be chosen | one artifact cannot be both draft and verified | one agent cannot act as both writer and auditor without role separation | one object cannot belong to conflicting universes without marking conflict | one judgment cannot be both final and unresolved | Prevent status leakage |
| Spin / orientation | Internal stance or phase orientation | tone / semantic direction | skill role orientation | specialist perspective | knowledge perspective | governance stance | Track how the unit is oriented |
| Mass / inertia | Resistance to change | strong local attractor | skill activation cost | domain switching cost | object revision cost | institutional review cost | Prevent overreaction |
| Boundary condition | Defines admissible state | grammar / context constraint | input/output artifact contract | domain rule and tool boundary | maturity criteria | governance protocol | Make responsibility explicit |

Useful engineering formulation:

Skill_i = {Scope_i, Input_i, Output_i, Entry_i, Exit_i, Failure_i, Trace_i}. (A.4)

A skill without this structure is not yet fermion-like. It is only a role label.
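The structure in (A.4) can be sketched as a small data type with a completeness check. This is a minimal illustration, not the framework's reference implementation: the class name, the snake_case field names, and the rule "fermion-like means no component is empty" are assumptions made for this example.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Skill:
    """Illustrative container for the seven components of (A.4)."""
    scope: str            # what the skill is allowed to transform
    input_spec: str       # required input artifact
    output_spec: str      # promised output artifact
    entry_condition: str  # when the skill may start
    exit_criteria: str    # when the transformation counts as closed
    failure_marker: str   # what is emitted when closure fails
    trace_sink: str       # where the execution record goes


def missing_components(skill: Skill) -> list[str]:
    """Return the names of components that are empty or whitespace-only."""
    return [f.name for f in fields(skill) if not getattr(skill, f.name).strip()]


def is_fermion_like(skill: Skill) -> bool:
    """Assumed reading: fermion-like means no component of (A.4) is missing."""
    return not missing_components(skill)


# A fully specified skill cell (all values are made up for illustration).
summarizer = Skill(
    scope="summarize one evidence bundle",
    input_spec="evidence_bundle",
    output_spec="summary_artifact",
    entry_condition="bundle is complete",
    exit_criteria="summary cites every source",
    failure_marker="summary_failed",
    trace_sink="execution_log",
)

# A bare role label: a name with no contract behind it.
role_label = Skill("summarizer", "", "", "", "", "", "")

print(is_fermion_like(summarizer))     # True
print(missing_components(role_label))  # every component except scope
```

The check is deliberately structural: it does not judge whether the contract is good, only whether one exists.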

A.4 Boson-Like Interaction Mapping

Core rule:

Boson-like signal = typed mediator + scope + decay + eligible receivers + effect. (A.5)

| Boson-like type | Physics role | Semantic runtime role | Typical emission condition | Typical receiver | Engineering use |
| --- | --- | --- | --- | --- | --- |
| Photon-like | Long-range observable interaction | completion event, citation, status, KPI, dashboard signal | artifact completed, source cited, state changed | many downstream cells | Synchronization and observability |
| Gluon-like | Strong local binding | artifact contract, schema binding, ontology binding | fragments must become one object | artifact builder, knowledge binder | Prevent raw fragment escape |
| W/Z-like | Short-range identity-changing transition | verification gate, escalation gate, maturity transition | draft wants to become verified; local finding wants to become decision | validator, reviewer, governance layer | Control status transformation |
| Higgs-like background | Gives mass / inertia through field interaction | policy, authority, risk, latency, cost threshold | always present as environment | all runtime units | Set activation energy and friction |
| Gravity-like trace | Long-range curvature from accumulated mass/history | precedent, trust, residual debt, memory bias | repeated use, failure, success, unresolved gap | router, reviewer, retriever | Historical curvature of future decisions |

Minimal schema:

SemanticBoson = {type, source, target_set, scope, wavelength, decay, effect, eligibility, audit}. (A.6)
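One way to read the schema in (A.6) is as a typed message that knows who may receive it. The sketch below is an assumption-laden illustration: the field types, the `route` method, and the choice to model `eligibility` as a predicate over receiver names are not specified by the source.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SemanticBoson:
    """Illustrative typed signal carrying the nine fields of (A.6)."""
    type: str                            # e.g. "deficit", "completion"
    source: str                          # emitting unit
    target_set: frozenset[str]           # units the signal is addressed to
    scope: str                           # layer or region where it is valid
    wavelength: str                      # "long", "medium", "short", "ultra-short"
    decay: float                         # how quickly the signal loses relevance
    effect: str                          # what a receiver is expected to do
    eligibility: Callable[[str], bool]   # assumed: predicate over receiver names
    audit: list[str] = field(default_factory=list)

    def route(self, candidates: list[str]) -> list[str]:
        """Return candidates that are both targeted and eligible; log the delivery."""
        receivers = [
            name for name in candidates
            if name in self.target_set and self.eligibility(name)
        ]
        self.audit.append(f"{self.type} from {self.source} -> {receivers}")
        return receivers


# Hypothetical deficit signal: a verifier reports a missing citation.
deficit = SemanticBoson(
    type="deficit",
    source="verifier",
    target_set=frozenset({"repair_skill", "citation_skill"}),
    scope="L1",
    wavelength="short",
    decay=0.5,
    effect="wake",
    eligibility=lambda name: name != "citation_skill",  # e.g. that skill is busy
)

print(deficit.route(["repair_skill", "citation_skill", "router"]))
# ['repair_skill']
```

Keeping the audit entry inside `route` means no delivery can happen without leaving a trace, which matches the trace requirement stated elsewhere in this appendix.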

A.5 Photon-Like Signals Across Layers

Photon-like signals make runtime state visible. They usually synchronize rather than force.

| Layer | Photon-like semantic signal | Example | Engineering purpose |
| --- | --- | --- | --- |
| L0 Token | delimiter cue, attention cue, special marker | `</json>`, function-call marker | Signal local structural boundary |
| L1 Skill | artifact completion event | evidence_bundle.completed | Tell downstream cells an artifact exists |
| L2 Agent / DSS | specialist status event | finance_dss.review_done | Coordinate domain-level workflow |
| L3 Knowledge | citation, link, review marker | source_verified, object_updated | Make provenance observable |
| L4 Governance | KPI, audit report, decision notice | decision_approved, residual_escalated | Synchronize institutional action |

Design rule:

Photon-like signals should inform many units but directly command few. (A.7)

A.6 Gluon-Like Binding Across Layers

| Layer | Gluon-like binding | Bound object | Failure if missing |
| --- | --- | --- | --- |
| L0 Token | syntax / grammar binding | valid phrase, JSON fragment, code block | malformed output |
| L1 Skill | artifact contract | ranked evidence bundle, contradiction report, code patch | partial artifact leakage |
| L2 Agent / DSS | domain invariant | legal memo, financial analysis, medical triage note | domain identity blur |
| L3 Knowledge | mature object binding | claim + evidence + provenance + residual + evaluation | raw RAG hallucination |
| L4 Governance | accountability binding | final decision + authority + audit + residual | unaccountable judgment |

Strong-force knowledge object:

MKO = Bind(claim, evidence, provenance, universe, residual, evaluation, update_history). (A.8)
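The binding in (A.8) can be sketched as a constructor that refuses to produce an object when any component is absent. This is a minimal sketch under stated assumptions: the names `bind`, `REQUIRED`, and `BindingError` are hypothetical, and "absent" is taken to mean not supplied or `None`, so an explicitly empty residual list still counts as declared.

```python
REQUIRED = (
    "claim", "evidence", "provenance", "universe",
    "residual", "evaluation", "update_history",
)


class BindingError(ValueError):
    """Raised when a fragment tries to escape without a full binding."""


def bind(**components) -> dict:
    """Return a mature knowledge object only if all seven parts of (A.8) are present."""
    missing = [name for name in REQUIRED if components.get(name) is None]
    if missing:
        raise BindingError(f"cannot bind MKO, missing: {missing}")
    # Keep only the seven bound components, in the order given by (A.8).
    return {name: components[name] for name in REQUIRED}


# Illustrative values only.
mko = bind(
    claim="Invoice 17 exceeds the approval limit",
    evidence=["ledger row 204"],
    provenance="finance export",
    universe="finance",
    residual=[],                 # explicitly empty, which is still a declaration
    evaluation="verified",
    update_history=[],
)
print(sorted(mko))

try:
    bind(claim="a raw snippet with nothing attached")
except BindingError as err:
    print(err)
```

Raising instead of returning a partial object is the point of the design: a raw snippet cannot become an answer by accident.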

A.7 Weak-Boson-Like Transition Gates Across Layers

Weak-boson-like gates control identity transformation.

| Transition | Semantic meaning | Required gate |
| --- | --- | --- |
| token candidate → emitted token | local selection | decoding rule |
| partial output → skill artifact | local closure | exit criteria |
| draft artifact → verified artifact | quality transition | validator gate |
| specialist answer → governed answer | authority transition | PORE / expert review |
| raw object → mature knowledge object | knowledge maturity transition | provenance + coverage + residual test |
| local judgment → institutional decision | responsibility transition | governance approval |

General gate formula:

GatePass = Eligibility · EvidenceSufficiency · ValidatorPass · AuthorityPass · ResidualAcceptability. (A.10)

If GatePass = 0, the transition must be blocked, repaired, residualized, or escalated. (A.11)
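Formulas (A.10) and (A.11) can be made concrete if each factor is treated as binary, so that the product is 1 only when every check passes. That binary reading is an assumption of this sketch, as are the names `GateChecks`, `gate_pass`, and `failed_factors`. The source does not say which of the four responses applies to which failure, so the sketch only reports what failed.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class GateChecks:
    """The five factors of (A.10), each assumed to be a yes/no check."""
    eligibility: bool
    evidence_sufficiency: bool
    validator_pass: bool
    authority_pass: bool
    residual_acceptability: bool


def gate_pass(checks: GateChecks) -> int:
    """Product of the five factors: 1 if all pass, 0 if any fails."""
    result = 1
    for f in fields(checks):
        result *= int(getattr(checks, f.name))
    return result


def failed_factors(checks: GateChecks) -> list[str]:
    """Names of the factors that forced GatePass to 0."""
    return [f.name for f in fields(checks) if not getattr(checks, f.name)]


# A draft that passed validation but lacks authority sign-off.
draft_to_verified = GateChecks(
    eligibility=True,
    evidence_sufficiency=True,
    validator_pass=True,
    authority_pass=False,
    residual_acceptability=True,
)

print(gate_pass(draft_to_verified))       # 0
print(failed_factors(draft_to_verified))  # ['authority_pass']
```

Because the factors multiply, a strong score on one check can never compensate for a failure on another, which is what distinguishes a gate from a weighted score.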

A.8 Higgs-Like Background Across Layers

| Layer | Higgs-like background | What gains inertia? |
| --- | --- | --- |
| L0 Token | temperature, decoding policy, grammar constraints | token choice |
| L1 Skill | activation threshold, cost budget, required inputs | skill wake-up |
| L2 Agent / DSS | domain authority, tool permission, severity class | specialist routing |
| L3 Knowledge | maturity standard, citation policy, update friction | knowledge revision |
| L4 Governance | legal authority, audit requirement, institutional risk | final decision |

Activation rule:

Activation_i = Signal_i − Threshold_i(Context, Policy, Cost, Risk). (A.12)
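A small numeric sketch of (A.12) follows. The source leaves the form of Threshold_i open, so two things here are assumptions: the threshold is modeled as a simple sum of its four inputs, and a unit is taken to wake only when Activation_i is strictly positive.

```python
def threshold(context: float, policy: float, cost: float, risk: float) -> float:
    """Assumed additive threshold; the source only says it depends on these four."""
    return context + policy + cost + risk


def activation(signal: float, thr: float) -> float:
    """Activation_i = Signal_i - Threshold_i, as in (A.12)."""
    return signal - thr


def should_wake(signal: float, thr: float) -> bool:
    """Assumed decision rule: wake only when activation is strictly positive."""
    return activation(signal, thr) > 0


# Same signal, two backgrounds: a cheap low-risk context and a costly risky one.
low_friction = threshold(context=0.25, policy=0.25, cost=0.25, risk=0.0)
high_friction = threshold(context=0.25, policy=0.25, cost=0.5, risk=0.5)

print(activation(1.0, low_friction), should_wake(1.0, low_friction))    # 0.25 True
print(activation(1.0, high_friction), should_wake(1.0, high_friction))  # -0.5 False
```

The same signal wakes the unit in one background and not in the other, which is the sense in which the Higgs-like background gives a unit inertia.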

A.9 Gravity-Like Trace Across Layers

| Layer | Trace form | Curvature effect |
| --- | --- | --- |
| L0 Token | generated context | biases next continuation |
| L1 Skill | execution logs | changes future skill confidence |
| L2 Agent / DSS | specialist performance history | affects routing and trust |
| L3 Knowledge | provenance and update history | affects retrieval weight |
| L4 Governance | precedent and residual debt | affects future review threshold |

Trace dynamics:

TraceWeight_i(k+1) = Decay · TraceWeight_i(k) + EventImpact_i(k). (A.14)

Residual debt:

ResidualDebt_j(k+1) = ResidualDebt_j(k) + UnresolvedResidual_j(k) − ResolvedResidual_j(k). (A.15)
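The two recurrences (A.14) and (A.15) can be stepped by hand. The sketch below assumes a decay factor between 0 and 1 so that old events fade; the function names and the sample numbers are illustrative only.

```python
def update_trace_weight(weight: float, event_impact: float, decay: float = 0.5) -> float:
    """One step of (A.14): old weight decays, new event impact is added."""
    return decay * weight + event_impact


def update_residual_debt(debt: float, unresolved: float, resolved: float) -> float:
    """One step of (A.15): debt grows with new residuals, shrinks with resolved ones."""
    return debt + unresolved - resolved


# A single strong event followed by two quiet steps: the trace halves each step.
weight = 0.0
trace_history = []
for impact in [1.0, 0.0, 0.0]:
    weight = update_trace_weight(weight, impact, decay=0.5)
    trace_history.append(weight)
print(trace_history)  # [1.0, 0.5, 0.25]

# Three residuals opened, then one opened while two are resolved.
debt = 0.0
debt_history = []
for unresolved, resolved in [(3, 0), (1, 2)]:
    debt = update_residual_debt(debt, unresolved, resolved)
    debt_history.append(debt)
print(debt_history)  # [3.0, 2.0]
```

The two quantities behave differently by design: trace weight forgets on its own, while residual debt only falls when something is actually resolved.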

A.10 Wavelength Mapping

| Wavelength | Semantic scope | Typical signal | Correct controller | Failure if mismatched |
| --- | --- | --- | --- | --- |
| Long wave | purpose, mission, policy, value frame | “be accurate,” “protect user,” “serve auditability” | system prompt, governance rule, PORE | Too vague for local syntax |
| Medium wave | workflow phase, domain, task regime | “now verify,” “finance universe active” | router, DSS selector, phase controller | Wrong specialist or wrong phase |
| Short wave | local artifact deficit | missing citation, contradiction, invalid assumption | verifier, contradiction checker, repair skill | Local error remains unresolved |
| Ultra-short wave | token / syntax / delimiter | brace, comma, schema token, function marker | constrained decoding, parser, grammar checker | Broken JSON, broken code, malformed output |

Control fit:

ControlFit = Match(Wavelength_problem, Wavelength_controller). (A.19)
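The matching rule in (A.19) can be sketched as a lookup from controller to wavelength, using the controllers listed in the table above. Treating `Match` as a strict equality test is an assumption of this sketch; a graded notion of fit would also be compatible with the formula.

```python
from enum import Enum


class Wavelength(Enum):
    LONG = "long"
    MEDIUM = "medium"
    SHORT = "short"
    ULTRA_SHORT = "ultra-short"


# Controller-to-wavelength assignments taken from the A.10 table.
CONTROLLER_WAVELENGTH = {
    "system prompt": Wavelength.LONG,
    "governance rule": Wavelength.LONG,
    "PORE": Wavelength.LONG,
    "router": Wavelength.MEDIUM,
    "DSS selector": Wavelength.MEDIUM,
    "phase controller": Wavelength.MEDIUM,
    "verifier": Wavelength.SHORT,
    "contradiction checker": Wavelength.SHORT,
    "repair skill": Wavelength.SHORT,
    "constrained decoding": Wavelength.ULTRA_SHORT,
    "parser": Wavelength.ULTRA_SHORT,
    "grammar checker": Wavelength.ULTRA_SHORT,
}


def control_fit(problem: Wavelength, controller: str) -> bool:
    """Assumed binary Match: the controller operates at the problem's wavelength."""
    return CONTROLLER_WAVELENGTH[controller] is problem


# Broken JSON is an ultra-short-wave problem.
print(control_fit(Wavelength.ULTRA_SHORT, "parser"))         # True
print(control_fit(Wavelength.ULTRA_SHORT, "system prompt"))  # False
```

The second call is the "local syntax controlled by vague instruction" case: a long-wave controller applied to an ultra-short-wave problem.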

A.11 Gauge Invariance Mapping

Gauge invariance means the governed meaning should remain stable under equivalent local representation changes.

| Gauge transformation | AI equivalent | What should remain invariant? | Test |
| --- | --- | --- | --- |
| Change of local phase | prompt paraphrase | core judgment | paraphrase robustness test |
| Change of coordinate frame | schema relabeling | object meaning | schema-label invariance test |
| Change of path representation | tool order variation | governed answer | tool-order test |
| Change of local observer | different specialist framing | accepted residual-aware conclusion | multi-frame review |
| Change of module name | role rename | function and responsibility | module-name perturbation test |

Gauge test:

Same object + equivalent projection frame → same governed answer. (A.20)

Gauge error:

GaugeError = Distance(G(A|F1), G(A|F2)) under F1 ≡ F2. (A.21)

If GaugeError > ε, then the runtime is frame-fragile. (A.22)

Gauge fragility usually means the system is over-dependent on wording, role labels, tool order, or local framing.
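The gauge test in (A.20) to (A.22) can be sketched as a small harness that runs the same object through two equivalent frames and measures how far the governed answers diverge. Everything concrete here is an assumption for illustration: the two toy `govern` functions, the 0/1 mismatch distance, the example frames, and the value of ε.

```python
from typing import Callable

Govern = Callable[[dict, str], str]  # (object, frame) -> governed answer


def mismatch(a: str, b: str) -> float:
    """Assumed distance: 0 if the governed answers agree, 1 otherwise."""
    return 0.0 if a == b else 1.0


def gauge_error(govern: Govern, obj: dict, frame_1: str, frame_2: str,
                distance: Callable[[str, str], float] = mismatch) -> float:
    """GaugeError = Distance(G(A|F1), G(A|F2)) for two equivalent frames."""
    return distance(govern(obj, frame_1), govern(obj, frame_2))


def is_frame_fragile(error: float, epsilon: float) -> bool:
    """(A.22): the runtime is frame-fragile when the error exceeds epsilon."""
    return error > epsilon


def robust_govern(obj: dict, frame: str) -> str:
    """Toy runtime that decides from the object alone and ignores wording."""
    return "reject" if obj["amount"] > obj["limit"] else "approve"


def fragile_govern(obj: dict, frame: str) -> str:
    """Toy runtime that keys on a word in the prompt instead of the facts."""
    return "reject" if "exceed" in frame else "approve"


invoice = {"amount": 120, "limit": 100}
f1 = "Is the amount over the limit?"
f2 = "Does the amount exceed the limit?"  # paraphrase of f1
EPSILON = 0.5

print(is_frame_fragile(gauge_error(robust_govern, invoice, f1, f2), EPSILON))   # False
print(is_frame_fragile(gauge_error(fragile_govern, invoice, f1, f2), EPSILON))  # True
```

In practice the hard part is establishing that F1 ≡ F2; the harness only measures divergence once that equivalence has been accepted.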

A.12 Particle / Force Mapping by Engineering Object

| Engineering object | Fermion-like aspect | Boson-like interaction | Binding force | Transition gate | Trace / gravity |
| --- | --- | --- | --- | --- | --- |
| Token | selected token identity | attention cue | grammar | decoding selection | context |
| Skill cell | bounded transformation | wake / deficit signal | artifact contract | exit criteria | execution log |
| Agent | role + memory + tool boundary | handoff / coordination signal | workflow invariant | delegation / escalation | agent performance history |
| DSS | domain-specific identity | cross-DSS evidence / conflict signals | domain ontology | expert review | specialist precedent |
| Knowledge object | universe-bound claim object | citation / review / update signals | claim-evidence-provenance binding | maturity gate | update history |
| Governed decision | accountable judgment | review / escalation / residual signals | authority + audit binding | approval gate | institutional precedent |

This table is often the quickest way to explain the framework to engineers.

A.13 Common Failure Modes by Quantum Analogy

| Failure | Quantum-structural analogy | Semantic runtime meaning | Engineering fix |
| --- | --- | --- | --- |
| Identity blur | fermion boundary failure | skill or agent acts outside scope | strengthen contracts and eligibility |
| Raw snippet becomes answer | confinement failure | unbound fragment escapes | mature object binding |
| Draft treated as final | weak-gate failure | identity transition bypassed | explicit verification gate |
| Too many modules wake | Higgs / threshold failure | activation energy too low | increase thresholds, scope signals |
| Important skill sleeps | insufficient signal coupling | deficit not represented | typed deficit boson |
| Same facts, different wording, different answer | gauge failure | frame fragility | gauge invariance tests |
| Old bad memory dominates | gravity over-curvature | stale trace bends routing too strongly | decay, freshness, residual review |
| Local syntax controlled by vague instruction | wavelength mismatch | long-wave prompt used for short-wave problem | parser / constrained decoder |
| Governance decided by local validator | wavelength mismatch | short-wave tool used for long-wave decision | PORE / review protocol |
| Specialist sounds expert but adds no value | expert theater | complexity bypasses baseline | expert superiority review |

A.14 The Self-Similar Closure Stack

The same closure structure repeats across levels:

| Level | Field | Projection | Identity | Interaction | Closure | Residual |
| --- | --- | --- | --- | --- | --- | --- |
| L0 Token | next-token distribution | context / attention | selected token | attention cues | emitted token | entropy |
| L1 Skill | task transformation space | decomposition | skill cell | semantic bosons | artifact | failure marker |
| L2 DSS | domain problem space | active universe | specialist system | handoff / conflict signals | specialist answer | boundary risk |
| L3 Knowledge | raw source space | indexing / schema | mature object | citation / review signals | governed knowledge | coverage gap |
| L4 Governance | competing judgments | PORE frame | decision record | expert review | accountable decision | residual debt |

General stack equation:

Closure_L(n) becomes Object_L(n+1). (A.23)

Then the same grammar repeats at the next level.

This is the framework’s fractal / self-similar core.
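The stack equation (A.23) can be illustrated with a toy loop in which each level's closure is handed to the next level as its input object, while each level's residual is recorded rather than discarded. The `run_closure_stack` and `make_closer` helpers are hypothetical, and the string-wrapping "closers" stand in for real token, skill, DSS, knowledge, and governance logic.

```python
from typing import Callable

Closer = Callable[[str], tuple[str, str]]  # object -> (closure, residual)


def run_closure_stack(initial: str, closers: list[tuple[str, Closer]]):
    """Apply each level in order: Closure_L(n) becomes Object_L(n+1)."""
    obj = initial
    residual_ledger = []
    for level, close in closers:
        closure, residual = close(obj)
        residual_ledger.append((level, residual))  # keep the honest leftover
        obj = closure                              # closure is the next level's object
    return obj, residual_ledger


def make_closer(label: str, residual: str) -> Closer:
    """Toy closer that wraps its input and reports a fixed residual type."""
    def close(obj: str) -> tuple[str, str]:
        return f"{label}({obj})", residual
    return close


# Closure and residual names follow the A.14 table.
stack = [
    ("L0 Token", make_closer("emitted_token", "entropy")),
    ("L1 Skill", make_closer("artifact", "failure marker")),
    ("L2 DSS", make_closer("specialist_answer", "boundary risk")),
    ("L3 Knowledge", make_closer("governed_knowledge", "coverage gap")),
    ("L4 Governance", make_closer("accountable_decision", "residual debt")),
]

final, ledger = run_closure_stack("context", stack)
print(final)
for level, residual in ledger:
    print(level, "->", residual)
```

The nesting in the printed result makes the self-similarity visible: every level has the same shape of input, closure, and residual, and differs only in what it treats as an object.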

A.15 Minimal Engineer’s Cheat Sheet

When designing a new agentic AI system, ask:

1. Field: What is the possibility space?
2. Projection: What prompt, schema, retrieval path, toolchain, or frame makes structure visible?
3. Fermion: What units must preserve identity?
4. Boson: What signals mediate interaction?
5. Photon: What events should become broadly observable?
6. Gluon: What fragments must be bound before they can escape?
7. Weak gate: What status transitions require validation?
8. Higgs: What thresholds prevent overreaction?
9. Gravity: What history should bend future routing?
10. Gauge: What must remain invariant under equivalent frame changes?
11. Wavelength: Is the controller operating at the correct semantic scale?
12. Residual: What remains unresolved, and where does it go?

A.16 One-Page Summary Formula

The entire appendix can be compressed as follows:

SemanticRuntime = Field + Fermions + Bosons + Gauge + Trace + Residual + Governance. (A.24)

Expanded:

SemanticRuntime = PossibilitySpace + IdentityUnits + InteractionSignals + InvarianceRules + HistoryCurvature + UnresolvedRemainders + ClosureAuthority. (A.25)

And the operational loop is:

Observe → Project → Bind → Interact → Close → Trace → Residualize → Govern → Update. (A.26)

This is the intended engineering reading of Semantic Gauge Grammar for Agentic AI.